Tokenization Explained with Code: BPE vs WordPiece vs SentencePiece


If neural networks understand numbers, how do they understand text?

The answer lies in tokenization — one of the most overlooked yet critical components of modern NLP systems.

In this post, we’ll break down how tokenization works, why subword tokenization became the standard, and how BPE, WordPiece, and SentencePiece compare, with intuition and code for each.

1. Why Tokenization Exists

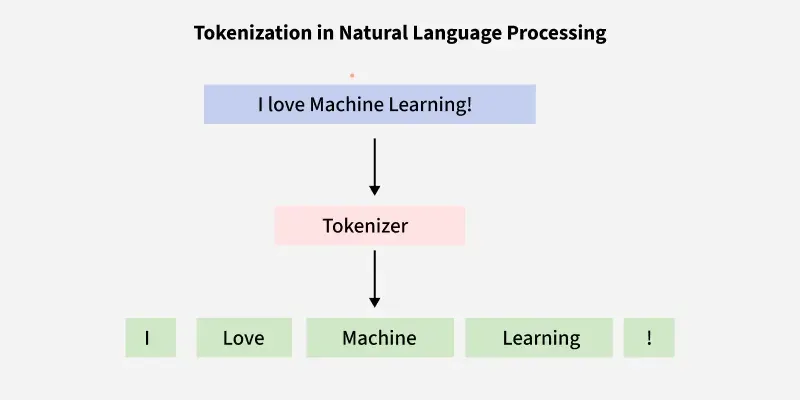

Neural networks cannot process raw text. They operate on numerical inputs.

So text must be converted into:

Text → Tokens → Token IDs → Embeddings
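To make the pipeline concrete, here is a minimal sketch in plain Python. The vocabulary and the 3-dimensional embedding values are invented for illustration; a real model learns its embedding table during training and uses hundreds or thousands of dimensions.

```python
# Toy illustration of Text -> Tokens -> Token IDs -> Embeddings.
# The vocabulary and embedding values below are made up.

text = "I love machine learning"

# 1) Text -> tokens (naive whitespace split, for illustration only)
tokens = text.split()

# 2) Tokens -> token IDs via a lookup table
vocab = {"I": 0, "love": 1, "machine": 2, "learning": 3}
token_ids = [vocab[t] for t in tokens]

# 3) Token IDs -> embeddings: each ID selects one row of a matrix
embedding_table = [
    [0.1, 0.3, -0.2],   # row 0: "I"
    [0.7, -0.1, 0.4],   # row 1: "love"
    [-0.5, 0.2, 0.9],   # row 2: "machine"
    [0.0, 0.6, -0.3],   # row 3: "learning"
]
embeddings = [embedding_table[i] for i in token_ids]

print(tokens)         # ['I', 'love', 'machine', 'learning']
print(token_ids)      # [0, 1, 2, 3]
print(embeddings[1])  # [0.7, -0.1, 0.4]
```

The model itself only ever sees the last step: a list of vectors, one per token.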

A naive approach would be word-level tokenization:

"I love machine learning" → ["I", "love", "machine", "learning"]

🚫 Problems with Word-Level Tokenization

- Huge vocabulary size

- Out-of-vocabulary (OOV) words

- Cannot handle rare words, typos, or new terms
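The out-of-vocabulary problem is easy to reproduce. The sketch below assumes a hypothetical word-level vocabulary with an `[UNK]` fallback entry; the names `vocab` and `encode_words` are illustrative, not from any library.

```python
# Hypothetical word-level vocabulary built from a tiny training set.
UNK = "[UNK]"
vocab = {UNK: 0, "i": 1, "love": 2, "machine": 3, "learning": 4}

def encode_words(text):
    """Map each whitespace-separated word to its ID, or to [UNK] if unseen."""
    return [vocab.get(word, vocab[UNK]) for word in text.lower().split()]

print(encode_words("I love machine learning"))  # [1, 2, 3, 4]
print(encode_words("I love machin lerning"))    # [1, 2, 0, 0]  typos collapse to [UNK]
print(encode_words("I love tokenizers"))        # [1, 2, 0]     new term collapses to [UNK]
```

Every unseen word maps to the same ID, so the model cannot tell a typo from a brand-new term.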

This led to subword tokenization.

2. Subword Tokenization: The Core Idea

Instead of treating each word as atomic, we split words into smaller units:

"unhappiness" → ["un", "happi", "ness"]

Why this works:

- Common words remain whole

- Rare words are decomposed

- Vocabulary size stays manageable

- Model can generalize better

The three most popular subword methods are:

- BPE (Byte Pair Encoding)

- WordPiece

- SentencePiece

3. Byte Pair Encoding (BPE)

🧠 Intuition

BPE starts with characters and repeatedly merges the most frequent pair.

🔁 Algorithm

- Initialize vocabulary with characters

- Count how often each adjacent pair of symbols occurs

- Merge the most frequent pair

- Repeat until vocab size is reached
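The loop above can be sketched from scratch in a few lines. This is a simplified teaching version, not the Hugging Face implementation: it stops after a fixed number of merges instead of a target vocabulary size, it omits the end-of-word marker that many implementations add, and the word counts are assumed for illustration.

```python
from collections import Counter

def get_pair_counts(words):
    """Count adjacent symbol pairs, weighted by how often each word occurs."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs

def merge_pair(words, pair):
    """Replace every occurrence of `pair` with a single merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

def train_bpe(word_freqs, num_merges):
    """Learn `num_merges` merge rules, starting from single characters."""
    words = {tuple(word): freq for word, freq in word_freqs.items()}
    merges = []
    for _ in range(num_merges):
        pairs = get_pair_counts(words)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # on ties, the first pair counted wins
        words = merge_pair(words, best)
        merges.append(best)
    return merges, words

# Assumed word counts for the toy corpus
word_freqs = {"low": 5, "lower": 2, "newest": 6, "widest": 3}
merges, segmented = train_bpe(word_freqs, num_merges=6)
print(merges)
for symbols in segmented:
    print("".join(symbols), "->", " + ".join(symbols))
```

With these assumed counts and six merges, `low` becomes a single token and `newest` comes out as `new + est`, while `lower` is still `low + e + r` because the pair (e, r) is rare. Different counts or more merges would give different splits.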

✨ Example

Corpus:

low, lower, newest, widest

After a few merges (illustrative; the exact splits depend on word counts and the number of merges):

low → low

lower → low + er

newest → new + est

💻 BPE with HuggingFace Tokenizers

from tokenizers import Tokenizer

from tokenizers.models import BPE

from tokenizers.trainers import BpeTrainer

from tokenizers.pre_tokenizers import Whitespace

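# Initialize a BPE model; characters never seen in training map to [UNK]

tokenizer = Tokenizer(BPE(unk_token="[UNK]"))

# Split on whitespace and punctuation before learning merges

tokenizer.pre_tokenizer = Whitespace()

# Reserve [UNK] in the vocabulary and cap the vocabulary at 1,000 tokens

trainer = BpeTrainer(vocab_size=1000, special_tokens=["[UNK]"])

# corpus.txt is a plain-text training file that you provide

tokenizer.train(files=["corpus.txt"], trainer=trainer)

output = tokenizer.encode("Tokenization is powerful")

print(output.tokens)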

✅ Used In

- GPT-2 (byte-level BPE)

- GPT-3 (byte-level BPE)

4. WordPiece

🧠 Intuition

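WordPiece is similar to BPE, but it chooses merges by likelihood rather than raw frequency.

Instead of merging the most frequent pair, it merges the pair that most increases the likelihood of the training data. In practice this favors pairs whose parts occur together more often than their individual frequencies would suggest.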
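One commonly cited way to make "likelihood rather than frequency" concrete is to score each pair as count(ab) / (count(a) × count(b)). The original WordPiece training code was not released, so treat this as an approximation drawn from public descriptions rather than the definitive algorithm. The toy corpus and its counts below are assumptions.

```python
from collections import Counter

def pair_scores(words):
    """Return {pair: (raw_count, score)}, score = count(ab) / (count(a) * count(b))."""
    symbol_counts, pair_counts = Counter(), Counter()
    for symbols, freq in words.items():
        for s in symbols:
            symbol_counts[s] += freq
        for a, b in zip(symbols, symbols[1:]):
            pair_counts[(a, b)] += freq
    return {
        pair: (count, count / (symbol_counts[pair[0]] * symbol_counts[pair[1]]))
        for pair, count in pair_counts.items()
    }

# Hypothetical corpus, already split into characters with "##" continuation markers
words = {
    ("h", "##u", "##g"): 10,
    ("p", "##u", "##g"): 5,
    ("p", "##u", "##n"): 12,
    ("b", "##u", "##n"): 4,
    ("h", "##u", "##g", "##s"): 5,
}

scores = pair_scores(words)
most_frequent = max(scores, key=lambda p: scores[p][0])
highest_score = max(scores, key=lambda p: scores[p][1])

print(most_frequent)  # ('##u', '##g'): what a frequency-based merge would pick
print(highest_score)  # ('##g', '##s'): what the normalized score picks
```

The pair (`##u`, `##g`) is the most frequent, but `##u` appears in almost every word, so its score is diluted. The pair (`##g`, `##s`) wins because `##s` almost never appears without `##g` in front of it.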

🔑 Special Feature

- Uses the ## prefix to mark a subword that continues a word

playing → play + ##ing
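At inference time, BERT-style WordPiece tokenizers split each word with a greedy longest-match-first lookup against the vocabulary. The sketch below follows that idea for a single word; the function name `wordpiece_tokenize` and the tiny vocabulary are illustrative assumptions.

```python
def wordpiece_tokenize(word, vocab, unk="[UNK]"):
    """Greedy longest-match-first segmentation of a single word."""
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        match = None
        # Try the longest remaining substring first, then shrink it
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # mark word-internal pieces
            if candidate in vocab:
                match = candidate
                break
            end -= 1
        if match is None:
            return [unk]  # no piece fits: the whole word becomes [UNK]
        pieces.append(match)
        start = end
    return pieces

# Hypothetical vocabulary
vocab = {"play", "##ing", "##ed", "token", "##ization"}

print(wordpiece_tokenize("playing", vocab))       # ['play', '##ing']
print(wordpiece_tokenize("tokenization", vocab))  # ['token', '##ization']
print(wordpiece_tokenize("xyz", vocab))           # ['[UNK]']
```

Real vocabularies normally include every single character, so the `[UNK]` fallback is rare in practice.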

💻 WordPiece in Transformers

from transformers import BertTokenizer

tokenizer = BertTokenizer.from_pretrained("bert-base-uncased")

text = "Tokenization matters a lot"

output = tokenizer.tokenize(text)

print(output)

Example Output

['token', '##ization', 'matters', 'a', 'lot']

✅ Used In

- BERT

- DistilBERT

- ELECTRA

5. SentencePiece

🧠 Intuition

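SentencePiece is a tokenization library rather than a single algorithm: it trains a BPE or unigram language model directly on raw text, treating the input as a stream of characters rather than pre-split words.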

🚫 No whitespace assumptions

This makes it language-agnostic.
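A rough sketch of the idea: whitespace is escaped to a visible marker (SentencePiece uses ▁, U+2581) so it becomes an ordinary symbol, and decoding is a plain string replacement. The helper names below are hypothetical, and the marker-based split is only a stand-in for the segmentation a trained model would produce.

```python
SPACE_MARK = "\u2581"  # "▁", the symbol SentencePiece uses in place of a space

def escape_whitespace(text):
    """Add a leading marker and replace spaces, so whitespace is just another symbol."""
    return SPACE_MARK + text.replace(" ", SPACE_MARK)

def split_on_marker(escaped):
    """Toy segmentation: start a new piece at every marker."""
    pieces, current = [], ""
    for ch in escaped:
        if ch == SPACE_MARK and current:
            pieces.append(current)
            current = ""
        current += ch
    if current:
        pieces.append(current)
    return pieces

def detokenize(pieces):
    """Join pieces, turn markers back into spaces, and drop the leading one."""
    return "".join(pieces).replace(SPACE_MARK, " ")[1:]

pieces = split_on_marker(escape_whitespace("I love NLP"))
print(pieces)              # ['▁I', '▁love', '▁NLP']
print(detokenize(pieces))  # I love NLP
```

Because the spaces are stored inside the tokens, decoding needs no language-specific rules about where spaces belong.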

🔥 Why SentencePiece is Powerful

- Works with languages without spaces (Chinese, Japanese)

- Handles noisy text better

- Uses the special symbol ▁ (U+2581) to represent spaces

"I love NLP" → ['▁I', '▁love', '▁NLP']

💻 SentencePiece Example

import sentencepiece as spm

spm.SentencePieceTrainer.train(

input='corpus.txt',

model_prefix='spm',

vocab_size=1000

)

sp = spm.SentencePieceProcessor()

sp.load('spm.model')

print(sp.encode("Tokenization is cool", out_type=str))

✅ Used In

- T5 (unigram model)

- ALBERT (unigram model)

- LLaMA (BPE model)

6. Key Differences at a Glance

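| Feature | BPE | WordPiece | SentencePiece |
|---|---|---|---|
| Merges by frequency | ✅ | ❌ | ✅ (BPE mode) |
| Likelihood-based | ❌ | ✅ | ✅ (unigram mode, the default) |
| Relies on whitespace pre-tokenization | ✅ (typically) | ✅ | ❌ |
| Language-agnostic | Partly (byte-level variants) | ❌ | ✅ |
| Used in | GPT-2, GPT-3 | BERT | LLaMA, T5 |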

7. Which Tokenizer Should You Use?

Use BPE if:

- You’re building a GPT-like model

- You want simplicity and speed

Use WordPiece if:

- You want BERT-style masked LM

- You care about likelihood-based merges

Use SentencePiece if:

- You’re working with multilingual data

- You want whitespace-independent tokenization

8. Why Tokenization Matters More Than You Think

Tokenization affects:

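- Context length: the more tokens a text needs, the less of it fits in the window

- Memory usage: the embedding table grows with vocabulary size, and activations grow with sequence length

- Training stability: tokens that rarely appear in training get few updates and can end up poorly learned

- Inference speed: generation cost scales with the number of tokens produced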
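Two of these effects can be estimated with simple arithmetic. The sketch below uses hypothetical helper names and assumed model sizes (a 2,048-token context, a 32,000-token vocabulary, 4,096-dimensional embeddings) purely for illustration.

```python
def tokens_per_word(samples):
    """Average number of tokens per whitespace-separated word."""
    total_tokens = sum(len(tokens) for _, tokens in samples)
    total_words = sum(len(text.split()) for text, _ in samples)
    return total_tokens / total_words

def words_that_fit(context_length, ratio):
    """Roughly how many words fit in a context window at a given tokens-per-word ratio."""
    return int(context_length / ratio)

def embedding_parameters(vocab_size, hidden_size):
    """Number of weights in the input embedding table."""
    return vocab_size * hidden_size

# One (text, tokens) pair, using the WordPiece split shown earlier in this post
samples = [("Tokenization matters a lot", ["token", "##ization", "matters", "a", "lot"])]

ratio = tokens_per_word(samples)
print(ratio)                              # 1.25
print(words_that_fit(2048, ratio))        # 1638
print(embedding_parameters(32000, 4096))  # 131072000
```

A tokenizer that needs twice as many tokens for the same text halves the amount of text that fits in the window and roughly doubles the number of generation steps.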

Bad tokenization can cripple model performance even with a strong architecture.

9. Final Thoughts

Tokenization is not just a preprocessing step — it’s a design decision.

Understanding how BPE, WordPiece, and SentencePiece work gives you an edge when:

- Fine-tuning LLMs

- Building RAG systems

- Designing multilingual models

Written by Jatin Chopra — AI Engineer & Technical Writer
